How to build a disaster recovery plan for your data warehouse

Table of Contents

TL; DR

- Disaster recovery (DR) in data warehouses ensures operations can resume quickly after failures by restoring data and infrastructure

- Disasters include hardware failures, power outages, natural disasters, human error, and large-scale outages like the 2024 CrowdStrike incident

- Effective backup strategies combine full, incremental, and differential backups, stored securely and verified regularly

- Cloud vs. on-premises approaches differ in redundancy, cost, and responsibility; hybrid and multi-cloud setups improve resilience but add complexity

- A solid DR plan requires regular testing, clear roles, documented runbooks, and scenario-based simulations to measure performance against business objectives

- With the right approach, outages become temporary setbacks rather than full-blown crises

Imagine your entire data warehouse goes offline right before a critical board meeting. Sales dashboards blank out, AI models stop mid-run, and teams scramble without data. In July 2024, Delta Airlines experienced this kind of disruption during the CrowdStrike outage. Nearly 7,000 flights were canceled, more than 1.3 million passengers were stranded, and losses topped $500 million because recovery systems didn’t respond quickly enough.

Disaster recovery (DR) planning is designed to prevent this outcome. Data warehouse disaster recovery is the set of practices that ensure you can restore data quickly and comprehensively after a failure. Two metrics define success:

- RTO (Recovery Time Objective): how fast you can resume operations

- RPO (Recovery Point Objective): how much data (in time) you can afford to lose

Every enterprise needs a plan to back up and restore critical warehouses. In the next sections, we’ll look at how to build that plan—starting with understanding what you are protecting against.

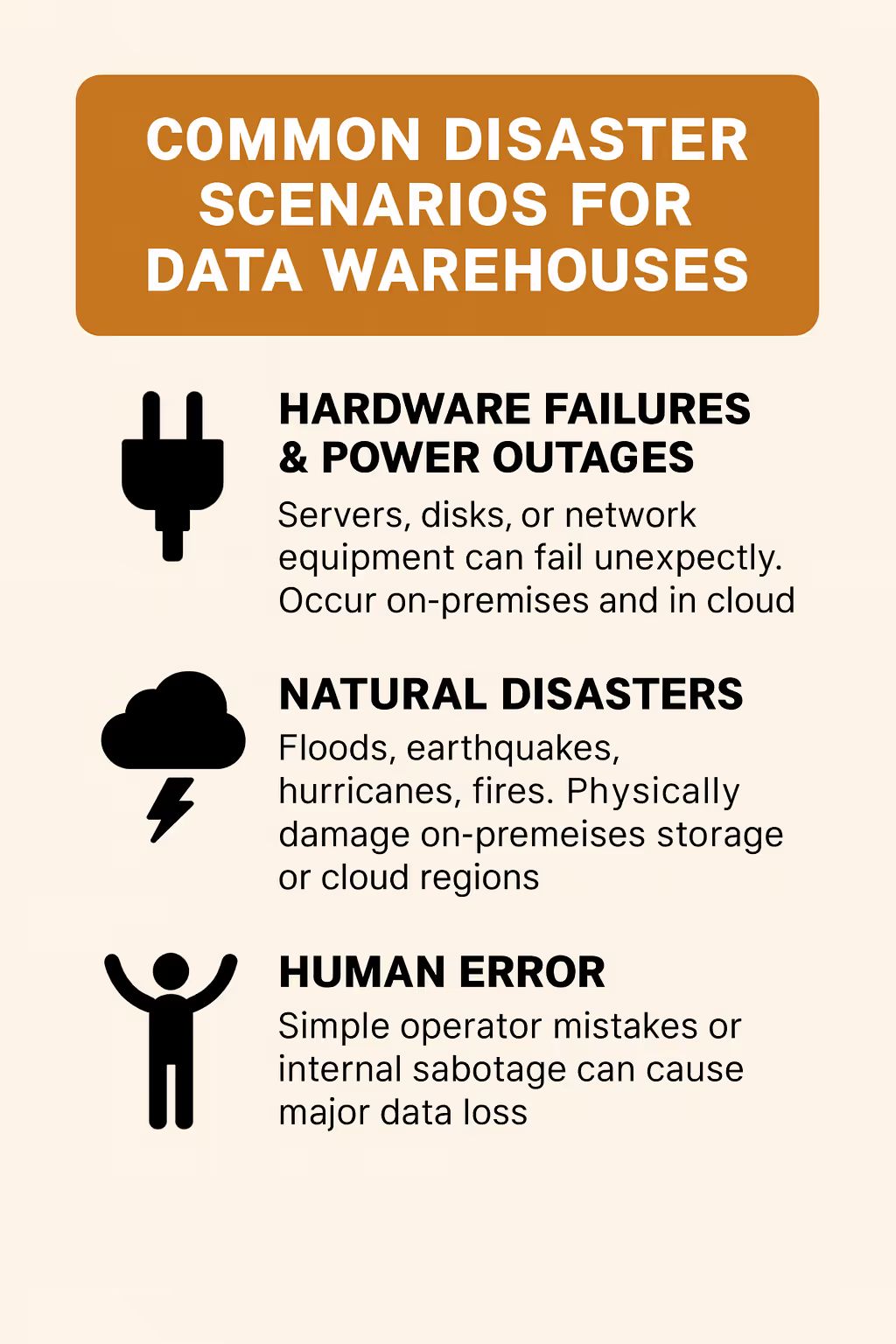

3 Common disaster scenarios for data warehouses

Disasters come in many forms, and a strong recovery plan must account for both predictable and unexpected failures.

Take, for example, the CrowdStrike outage in July 2024. A faulty security update crashed over 8.5 million Windows devices worldwide, disrupting operations across industries.

For many organizations, the impact was compounded by simultaneous issues with Microsoft Azure, leading to days of downtime and an estimated $5.4 billion in losses among the top 500 U.S. companies.

Beyond such headline-making failures, data warehouses face a wide range of risks:

- Hardware failures & power outages: Servers, disks, or network equipment can fail unexpectedly. Power grid failures or cooling system outages can also bring down on-premises warehouses. Even in the cloud, zone or region failures happen

- Natural disasters: Floods, earthquakes, hurricanes, and fires can physically damage data center facilities. Any regional disaster can cut off access to your on-prem systems or even cloud regions. If your backups are in the same affected area, that’s a problem

- Human error: One of the leading causes of downtime is simple mistakes. Accidental deletion of tables, dropping the wrong database, or misconfiguring infrastructure can all cause major data loss. Internal sabotage is rare but possible—a disgruntled admin could deliberately wipe or corrupt data

Also read: 7 Trusted Snowflake Alternatives

5 Backup strategies for data warehouses

The average cost of downtime has inched as high as $9,000 per minute for large organizations. For higher-risk enterprises like finance and healthcare, downtime can eclipse $5 million an hour.

At the heart of any disaster recovery strategy are backups. If your data is not backed up, you simply cannot recover it when things go wrong. But not all backups are equal.

Let’s break down effective backup strategies for data warehouses, including types of backups, frequency, and retention, as well as how they relate to the all-important RTO and RPO targets.

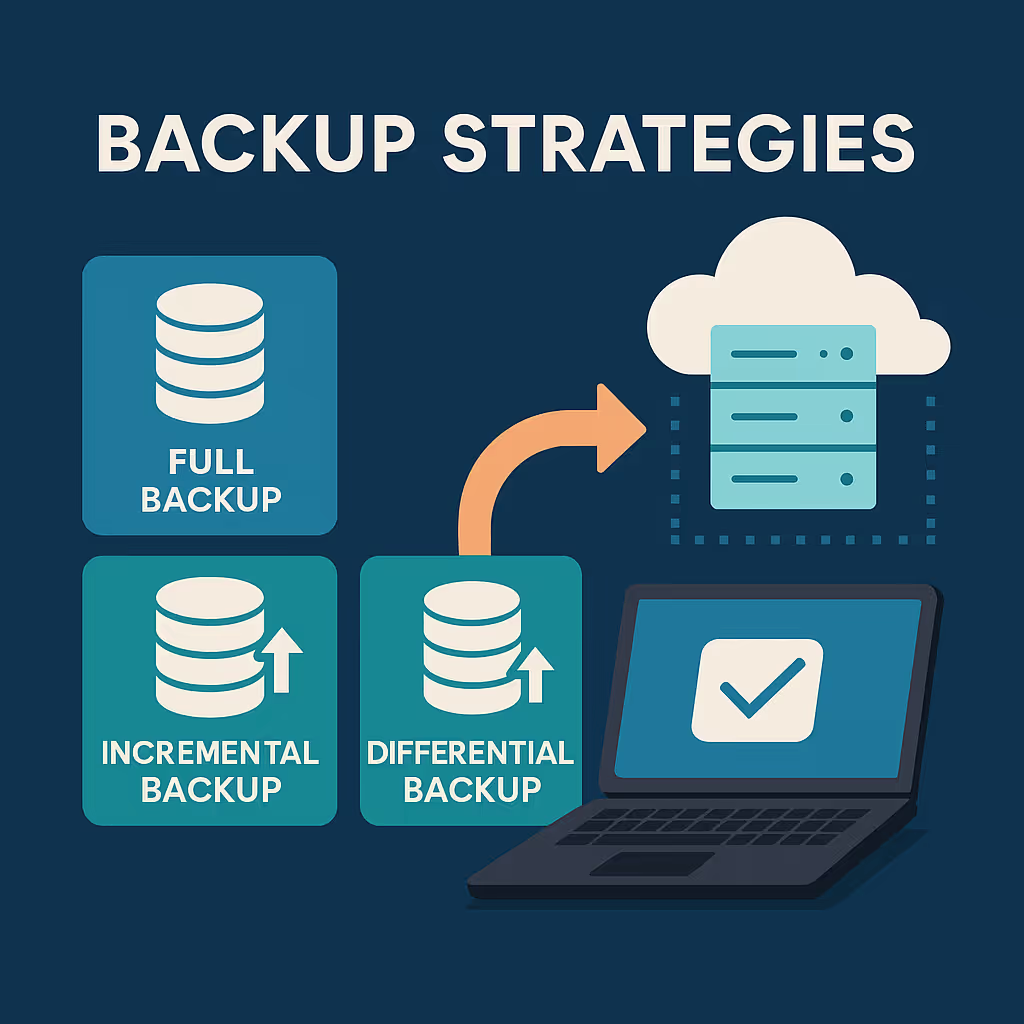

#1 Choose the right backup types

Most teams use a combination of full, incremental, and differential backups:

- Full backups: a complete copy of your entire data warehouse at a point in time. This is the gold master for recovery, but it’s time-consuming and storage-intensive. Typically done weekly or monthly due to size

- Incremental backups: save only the data that changed since the last backup (of any type). These are faster and use less storage, so you can run them frequently (daily or even hourly). However, restoring can be slower since you’ll apply a chain of incrementals on top of the last full

- Differential backups: save changes since the last full backup. Differentials strike a middle ground: larger than incrementals, but faster to restore (only two pieces: last full + last differential)

The goal is to minimize potential data loss (meet your RPO, Recovery Point Objective) while balancing storage and performance.

For example, you might do a weekly full backup with daily differentials, giving you an RPO of 24 hours. If you need a tighter RPO, you could do full backups weekly and incrementals hourly, or even use continuous backup features if your warehouse supports it.

Also read: How to Handle Data Loss During Migration: A Comprehensive Guide

#2 Store backups in separate, secure locations

Always store backups off-site or in the cloud away from your primary warehouse location. For on-premises warehouses, that might mean sending backups to a cloud storage bucket or a remote data center.

In the cloud, consider cross-region replication: e.g. copy your backups from US-East to US-West region. This protects against a regional outage. Also ensure backups are secure—encrypted at rest and in transit, with access controls. The nightmare scenario is discovering your backup files were corrupted or deleted by an attacker right when you need them.

#3 Automate and verify backups

Backups should not rely on manual triggers. Implement automated backup jobs or use managed backup services so that nothing is left to chance (or an overlooked cron job). Backup verification is equally critical: you must routinely test that backups can be restored successfully.

It’s sadly common that organizations dutifully take backups but never try restoring them until a disaster hits – only to find the backups were incomplete or corrupted.

To avoid this, do things like: restore a backup copy to a staging warehouse and run data validation checks, or use backup tools that perform checksum integrity checks. Verification ensures your backups meet the integrity and usability requirements for a healthy backup process.

#4 Define retention policies

How long will you keep backup copies? Long enough to recover from slow-burning disasters. For example, if corruption went unnoticed for a week, you’d need backups older than a week to recover a clean state. Many companies keep daily/weekly backups for 30 days, and monthly or quarterly full backups for a year or more. Compliance requirements may dictate retention (e.g. financial data backups retained for 7 years).

Also read: Best Data Governance Tools

#5 Consider backup consistency and scope

In a data warehouse, data is often flowing in from multiple sources. Are you backing up just the raw tables, or transformed tables, or also metadata (like BI dashboards, code, etc.)?

A thorough strategy might include: backing up raw data (though ideally source systems have their own backups), backing up the warehouse data itself, and backing up analytics artifacts (semantic layer definitions, transformation scripts, etc.).

As a data leader, you should be able to answer confidently: “When was our last backup, where is it stored, and have we tested restoring it recently?” If you can’t, that’s priority number one to fix.

Also read: Open source data platforms: benefits, risks, and how to make the right choice

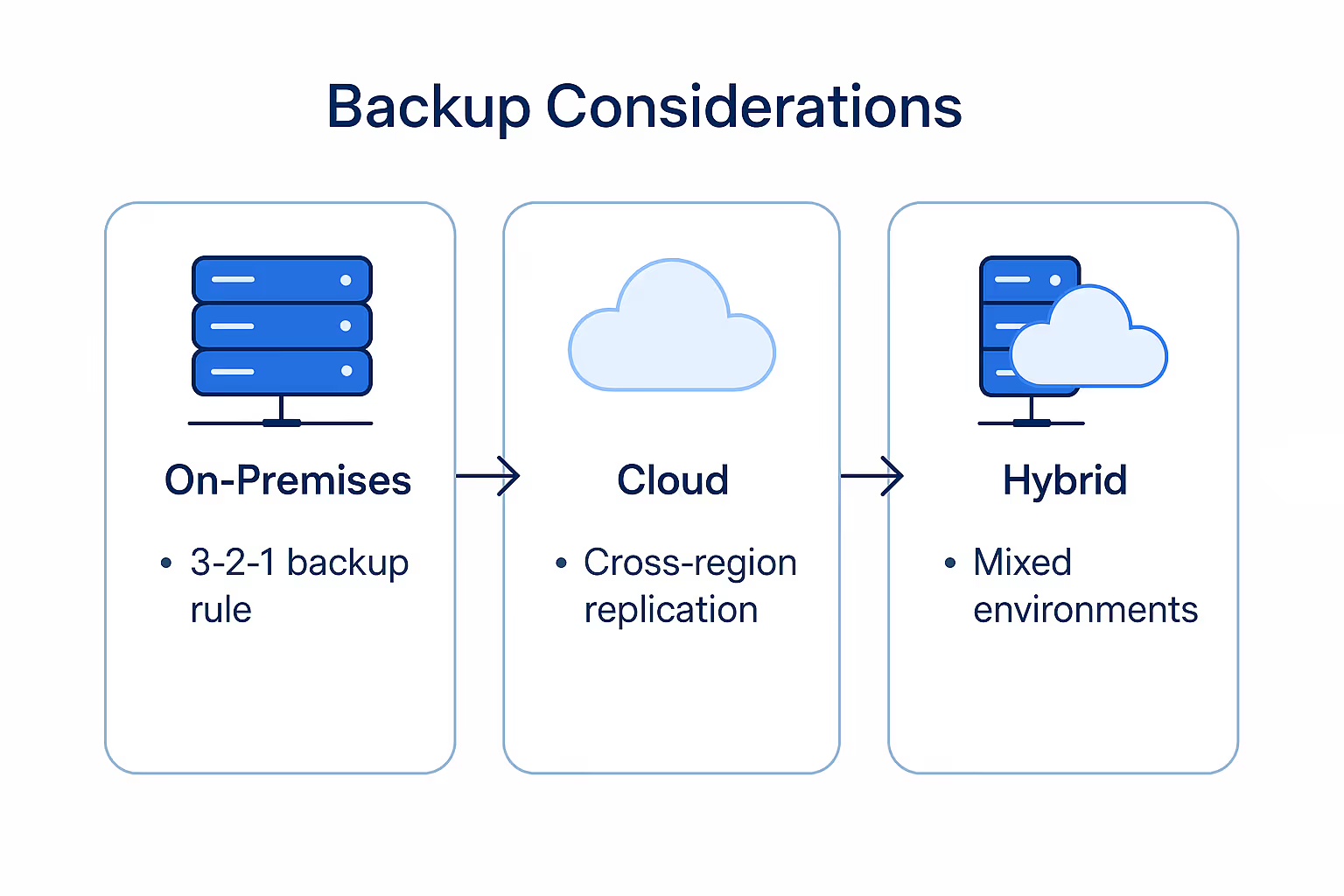

Cloud vs on-premises backup considerations

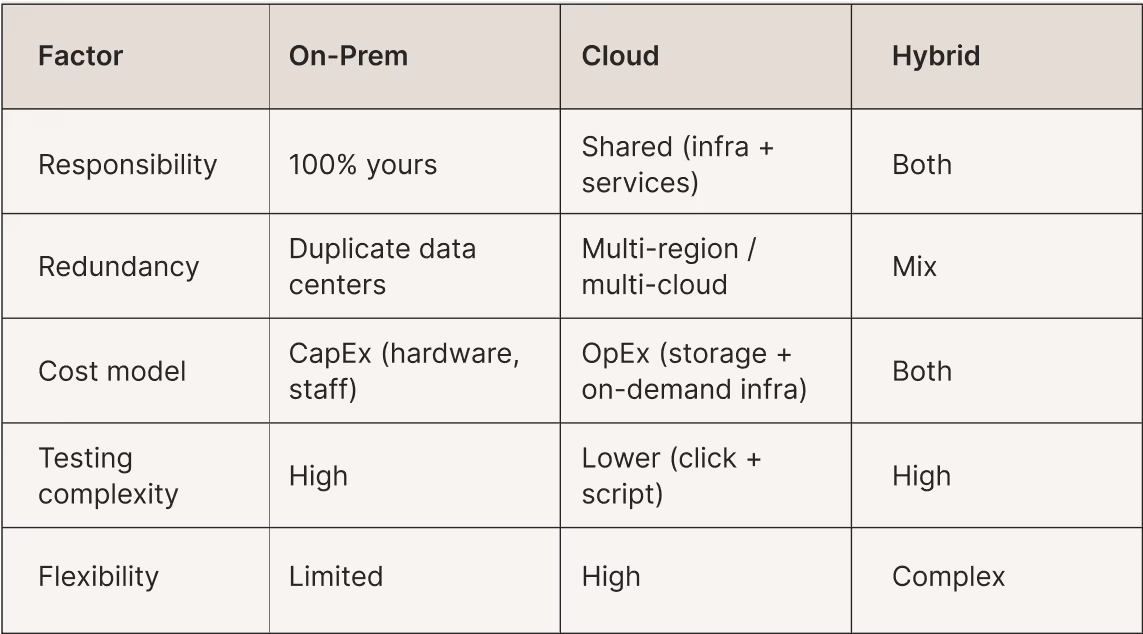

Your disaster recovery approach will differ depending on whether your data warehouse is on-premises, in the cloud, or a hybrid of both. Cloud vs on-premises comes with different tools, assumptions, and responsibilities. Let’s compare considerations for each:

#1 On-premises data warehouses

If you manage your own servers (in your data center or co-location), you are fully responsible for building in redundancy and backups. On-prem environments need geographic redundancy – e.g. replicating data to a secondary data center or at least storing backups off-site.

A classic best practice is the “3-2-1 backup rule”: keep 3 copies of your data, on 2 different media, with 1 copy off-site. For on-prem, that might mean production storage on disk, a second copy on local tape, and a third copy archived to cloud storage. Also consider hardware spares and failover capacity: do you have standby servers that can take over if the primary fails? If not, your RTO could be long (you’d be scrambling to procure and install hardware during a crisis).

#2 Cloud data warehouses

In the cloud (whether using Snowflake, BigQuery, Redshift, or a cloud data lake), you get some built-in durability, but you shouldn’t rely blindly on it. Cloud providers handle intra-region redundancy (disks and servers failing are handled behind the scenes with replication in most cases). However, region-wide outages do occur, so cross-region or multi-cloud strategies are important if you need high continuity.

The good news is cloud makes certain DR strategies easier: you can often enable continuous or frequent backups with a click, and storing backups in another region is as simple as copying to a different bucket. Cloud also enables on-demand failover infrastructure. Instead of maintaining a live secondary warehouse 24/7 (as on-prem), you might keep a replica that is inactive until needed or rely on infrastructure-as-code to spin up a new warehouse from backups quickly.

#3 Hybrid and multi-cloud

If your data platform spans on-prem and cloud, or multiple clouds, you gain resiliency (e.g. one cloud’s failure won’t take down everything) but must coordinate DR across environments. A hybrid DR plan might involve copying on-prem warehouse backups to a public cloud for safekeeping and quick spin-up.

Conversely, you might use on-prem storage as a backup for cloud data if compliance demands a local copy. Just ensure data formats and tools are compatible (e.g. if you export Redshift data to on-prem storage, can you easily load it into an on-prem database if needed?). Similarly, in multi-cloud deployments, you could replicate your data warehouse from Cloud A to Cloud B (for example, periodically unload data from BigQuery to cloud storage and sync to Azure Blob or AWS S3 for a parallel Snowflake instance). This can shield you from a total outage of one provider, albeit at increased complexity.

Also read: Optimizing data platform performance: faster, leaner, smarter

Testing your disaster recovery plan

Plans fail when they’re untested. Here’s how to avoid that:

#1 Simulate real disasters

- Schedule drills: shut down primary warehouse and fail over

- Restore from last backup to a staging environment and validate data

- Aim for live drills quarterly or at least annually

#2 Define roles clearly

- Who triggers failover? Who validates the restore? Who communicates to execs?

- Practice ensures coverage even when team members change

#3 Benchmark RTO/RPO

- Measure actual recovery time and data loss

- Compare results to your RTO/RPO targets

- Adjust backup frequency or infrastructure if thresholds are missed

#4 Test varied scenarios

- Site-level outage

- Ransomware wipe

- Silent corruption

- Each scenario has a different recovery path and risk profile

#5 Update documentation after each drill

- Capture lessons learned and version runbooks

- Update links, contact lists, and automation scripts

#6 Repeat frequently

- Re-test after infrastructure or data changes

- Each rehearsal improves your plan and strengthens resilience

Build resilience on a foundation you control

Disaster recovery for data warehouses may feel overwhelming, but with structured risk assessment, reliable backups, infrastructure-aware planning, and disciplined testing, even critical outages can become just temporary setbacks. At its heart, DR ensures business continuity—keeping insights flowing even when systems falter.

If you’re looking for a platform that supports flexibility and governance without compromise, consider 5X. Built on an end‑to‑end open‑source foundation, it delivers total data control, zero vendor lock‑in, enterprise‑grade security, and compliance readiness. These capabilities let your organization respond swiftly and confidently—without getting boxed in.

FAQs

What are the recovery models of a data warehouse?

What are the 5 steps of disaster recovery?

What is the difference between rto and rpo?

Building a data platform doesn’t have to be hectic. Spending over four months and 20% dev time just to set up your data platform is ridiculous. Make 5X your data partner with faster setups, lower upfront costs, and 0% dev time. Let your data engineering team focus on actioning insights, not building infrastructure ;)

Book a free consultationHere are some next steps you can take:

- Want to see it in action? Request a free demo.

- Want more guidance on using Preset via 5X? Explore our Help Docs.

- Ready to consolidate your data pipeline? Chat with us now.

How retail leaders unlock hidden profits and 10% margins

Retailers are sitting on untapped profit opportunities—through pricing, inventory, and procurement. Find out how to uncover these hidden gains in our free webinar.

Save your spot

%201.svg)

.png)