The ultimate guide to data warehouse optimization

.avif)

Table of Contents

TL; DR

- Slow data warehouses cost you time and money. If your analysts are waiting minutes (or hours) for queries to run, you’re losing agility and incurring unnecessary cloud costs.

- Optimizing your data warehouse improves query speed, reduces resource waste, and ensures your business intelligence systems deliver answers when you need them. Companies with strong data optimization practices report significantly faster decision-making and lower costs

- Implement proven data warehouse optimization techniques like indexing crucial columns, partitioning large tables by date or category, compressing data, using query optimization (like rewriting SQL or leveraging materialized views), and adopting modern architectures (columnar storage, parallel processing, etc.)

- Benefits: A leaner, faster warehouse means real-time analytics, happier users, and dollars saved. Optimized warehouses handle growing data without performance dips, enabling data teams to deliver insights faster

- 5X can help you achieve these gains quickly. It is built on an open-source foundation and deployable in your cloud or on-premises, so you keep full control of data while getting enterprise-grade performance

Your team is ready to make a critical business decision, but everyone is staring at a spinning wheel as a query churns through millions of rows. You’re collecting more data than ever, but slow queries, outdated reports, and bottlenecks drag everything down.

When dashboards take too long to load or reports arrive days late, opportunities slip away. If your data backbone can’t keep up, even the best analytics tools or AI models won’t deliver results on time.

In this post, we’ll paint a clear picture of the problem (poor data warehouse performance and its consequences) and then dive into practical strategies to fix it. From indexing and partitioning to query tuning and modern cloud architectures, you’ll learn how to speed up your data warehouse, cut costs, and streamline data processing.

Why you need to optimize your data warehouse

Without optimization, a warehouse can become a drag on your entire data analytics workflow. Here are the key reasons you should care about tuning your warehouse for performance and efficiency:

1. Faster insights = competitive advantage

Real-time data can be a game-changer. If your queries run slowly, decision-makers lose the ability to respond quickly.

High-performing warehouses ensure that reports and dashboards refresh in seconds, not hours, enabling agile, data-driven decisions.

For instance, companies with fast analytics infrastructure are much more likely to capitalize on fleeting market opportunities. On the flip side, a slow warehouse means missed chances; by the time you get the answer, the window to act may have closed.

2. Growing data volumes and user demands

Data warehouses are handling more data and more concurrent users than ever. As your business grows, so do the data volumes (think terabytes to petabytes) and the complexity of analytics (AI/ML workloads, complex joins, etc.).

Without optimization, performance will degrade non-linearly; queries that once took 1 minute could take 10 as data grows. Scalability is crucial.

Optimizations like partitioning and efficient data modeling ensure that even as data scales 10x, query performance remains steady. This is especially vital for Business Intelligence tools that many users query simultaneously.

3. Cost control

An inefficient warehouse is very expensive. Cloud data warehouses charge by storage and compute time, meaning every unoptimized query that scans gigabytes unnecessarily or every outdated partition that isn’t pruned translates to dollars wasted.

We typically see that the data warehouse is about 40% of the cost of an entire infrastructure. So, if the bill is about a hundred thousand dollars, about forty thousand goes to the warehouse.

~ Tarush Aggarwal, CEO, 5X

Secrets to slashing 30% of your data platform cost

Conversely, optimization techniques (like compressing data, filtering queries, or right-sizing compute resources) directly reduce your monthly bill. It’s not uncommon to save 20–30% on cloud data warehouse costs through performance tuning and cleanup.

4. Reliability and user trust

When the data warehouse is slow or frequently overloaded, end-users start to lose confidence. They might resort to exporting data to Excel or creating shadow IT solutions, which leads to inconsistent results and security risks.

Optimizing the warehouse improves system reliability; queries succeed within expected timeframes, and data is up-to-date. This reliability builds trust in the data platform. Users can confidently use centralized dashboards rather than each running their own extracts.

5. Modern analytics and AI require it

Newer use cases like real-time analytics, machine learning, and AI-driven applications are resource-hungry. If you plan to feed your warehouse data into an AI model or support interactive analytics, you can’t afford lag.

A classic scenario: a machine learning model retraining overnight fails because the warehouse took too long to supply data. High-performance warehouse practices (like caching results, using materialized views, or leveraging in-memory processing) ensure that even advanced workloads can be handled.

Traditional data warehouses often fall short here; many legacy systems were built for periodic batch reports, not today’s AI-powered demands. Optimization or migration to a modern architecture is needed to bridge that gap.

Also read: Manual vs. Model: The Smart Way to Assess Your Data and AI Maturity

8 Expert-proven techniques for data warehouse performance optimization

Each of these techniques addresses a specific performance pain point, and often the best results come from combining them.

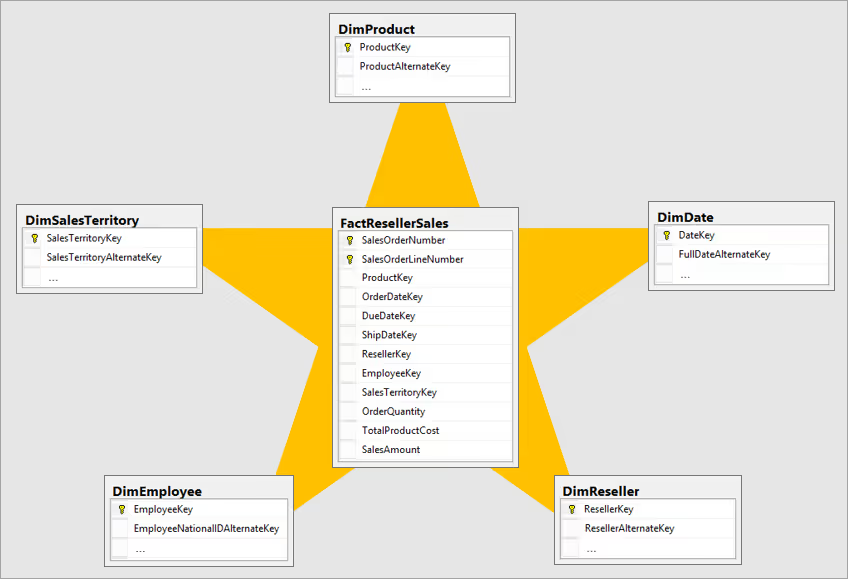

1. Data modeling and schema design (star schemas)

Start with a solid foundation: your data model. A well-designed schema can massively speed up queries.

The go-to approach in warehousing is the star schema: a central fact table (with transactional data) linked to dimension tables (with categories like date, product, customer, etc.). This denormalized design reduces the number of joins needed and simplifies queries.

Tip: Design your schema for the queries you need. If analysts frequently need sales by region by month, ensure “region” and “month” are dimensions directly associable with the fact table (perhaps via surrogate keys), rather than buried in a convoluted table chain.

2. Indexing strategies

An index is like a book’s index; it lets the system find data by a key without scanning everything. In a data warehouse, you should create indexes on columns that are frequently used in WHERE clauses, JOIN conditions, or grouping operations.

For example, indexing a date column on a huge fact table means a query for last month’s data can directly jump to that date range instead of reading the entire table. Indexes can dramatically speed up data retrieval, especially for selective queries.

Common index types for warehousing include B-tree indexes (good for range scans and equality on well-distributed data) and bitmap indexes (highly effective for low-cardinality columns in ad-hoc queries). Bitmap indexes, in particular, can accelerate complex filters on large fact tables with many possible filters (typical in data marts).

3. Partitioning large tables

Partitioning is a game-changer for managing big tables. It means breaking a large table into smaller sub-tables (partitions) based on a key, such as date, region, or some category. The database can then skip reading partitions that aren’t relevant to a query (this is called “partition pruning”).

For example, if you partition a fact table by month, a query for Q1 2025 will only scan the Jan, Feb, Mar 2025 partitions, not the entire table. This improves I/O and query times drastically.

Common partitioning schemes include range partitioning (e.g., date ranges), list partitioning (specific values go to specific partitions), and hash partitioning (spread data evenly for parallelism). Choose the scheme based on query patterns. Date/temporal partitioning is very popular since many analytical queries are time-bound.

Modern cloud warehouses often do automatic partitioning or clustering, but you usually still declare a partition key. Ensure new data consistently lands in new partitions.

4. Data compression and encoding

Storing data efficiently not only saves disk space, it also speeds up query execution by reducing I/O. Compression techniques can shrink your warehouse data footprints significantly (often 3-10x smaller).

When a query reads compressed data, it reads fewer bytes from disk, and many columnar storage systems can operate on compressed data directly in memory.

Most cloud data warehouses use columnar storage with compression by default (e.g., Parquet, ORC formats or proprietary like Snowflake’s micro-partitions). As a user, make sure to choose the right compression/encoding for each column if your platform allows it.

For example, a column of repetitive values might compress well with run-length encoding; high-cardinality text might need dictionary encoding, etc.

5. Query optimization and SQL tuning

Sometimes the biggest gains come from simply writing better queries. “Query optimization” means adjusting your SQL or the database’s execution plan to run faster. Modern query optimizers are quite advanced, but you can help them by following best practices:

- Avoid SELECT (star): Query only the columns you need. This reduces data transfer and allows better use of columnar storage

- Filter early and specifically: Use WHERE clauses to narrow data as much as possible, and avoid functions on columns in the predicate (which can disable index use). For example, do WHERE date >= '2025-01-01' instead of WHERE YEAR(date) = 2025

- Optimize JOINs: Use appropriate join types and make sure join keys are indexed or partitioned. Joining smaller subsets (after filtering) is faster than joining huge raw tables then filtering

- Reduce complex subqueries: Sometimes breaking a complex subquery into temp tables or using a WITH (CTE) can let the optimizer better plan execution. Also, prefer JOIN over IN or EXISTS in many cases for large data sets (depending on your system’s strengths)

- Aggregation pushdown: If possible, aggregate or pre-summarize data in the warehouse rather than in application code. Use database functions (like GROUP BY, window functions) which are optimized in the engine, instead of fetching raw data to process externally

6. Materialized views and result caching

For frequently run complex queries, nothing beats not running them at all. That's where materialized views and caching come in.

A materialized view is a precomputed result of a query, stored like a table. Instead of recalculating a heavy join or aggregation each time, the database can just read the precomputed result. This is incredibly useful for dashboards that hit the same summarizations repeatedly.

For example, a materialized view of “daily sales by product by region” can serve many reports instantly, whereas computing it from scratch could take minutes. Keep materialized views refreshed (either on a schedule or when source data changes). Many systems can automatically rewrite queries to use a matching materialized view behind the scenes.

Query result caching is a slightly different approach: some warehouses (like Snowflake, Oracle, etc.) will cache the results of a query for a short time. If an identical query is run again, it returns the cached result immediately.

Take advantage of this by not adding unnecessary randomness to queries (e.g. avoid NOW() in a query that’s run repeatedly if you can pass a constant instead, so the cache hits).

7. Parallel processing and scalable architecture

Modern data warehouses achieve speed through massive parallel processing (MPP), basically, splitting the work across many nodes. To leverage this, ensure your warehouse is configured to use its parallel capabilities.

This could mean increasing the cluster size (more nodes or cores for concurrency) or using features like clustering keys or distribution keys to spread data evenly. Distribute data across nodes (sharding) so no single node becomes a bottleneck for large queries.

For instance, in Amazon Redshift you choose a distribution style (even, key, all) to control how data is sharded; in BigQuery, the service automatically splits data; in Snowflake, you can scale up a “virtual warehouse” to more servers for parallelism.

8. Streamlined ETL/ELT and maintenance

Though not a “query” optimization per se, optimizing your ETL (Extract, Transform, Load) processes will indirectly boost warehouse performance and reduce costs. Efficient ETL means data arrives in the warehouse in the right form, ready for fast queries. Some tips:

- Incremental loading: Don’t reload entire tables if only a part changed. Use change data capture or incremental upserts. This keeps the warehouse lean and avoids large batch jobs that hog resources

- Staging and batching: Load data into a staging area and batch inserts/updates into the warehouse to avoid constant small transactions. Many small writes can fragment storage and slow queries; better to do one bulk load when possible

- Data quality and cleaning: Remove or archive obsolete data. As Alibaba Cloud’s guidance notes, regular data cleaning (removing duplicates, old records) frees up space and keeps queries fast

- Statistics and maintenance: Ensure the database’s query optimizer has up-to-date statistics on data distribution. Out-of-date stats can lead to poor query plans. Schedule analyze/update stats if your system doesn’t do it automatically

- Monitoring and alerting: Use monitoring tools to catch performance issues early. If a query suddenly slows down or a job spills to disk (exceeding memory), those are signs to investigate indexing or query rewrites. Proactive monitoring helps maintain performance over time

Tying it all back together

We’ve covered the basics: indexing, partitioning, query tuning, modern architecture. All of it matters if you want a warehouse that’s fast, efficient, and ready for AI. The problem is, keeping all those knobs tuned isn’t easy. Most teams don’t have the time or people to stay on top of it.

That’s where 5X helps. We don’t sell you a warehouse. We make sure the one you already have runs at its best. 5X connects ingestion, transformation, orchestration, and BI into one platform, and bakes in the optimizations that normally take months to get right.

Built on open-source foundations. Runs in your cloud or on-prem. No lock-in. No wasted spend.

FAQs

What is data warehouse optimization?

What are the best tools for optimizing data warehouses?

What are the recovery models of a data warehouse?

What are the 5 steps of disaster recovery?

Building a data platform doesn’t have to be hectic. Spending over four months and 20% dev time just to set up your data platform is ridiculous. Make 5X your data partner with faster setups, lower upfront costs, and 0% dev time. Let your data engineering team focus on actioning insights, not building infrastructure ;)

Book a free consultationHere are some next steps you can take:

- Want to see it in action? Request a free demo.

- Want more guidance on using Preset via 5X? Explore our Help Docs.

- Ready to consolidate your data pipeline? Chat with us now.

How retail leaders unlock hidden profits and 10% margins

Retailers are sitting on untapped profit opportunities—through pricing, inventory, and procurement. Find out how to uncover these hidden gains in our free webinar.

Save your spot

%201.svg)

.png)